Conflict Superintelligence

AI will be smarter than humans, but will it escalate or de-escalate human conflict?

It now seems likely that machines will soon become much smarter than humans. There is the very real possibility of vastly improving a great many aspects of the human experience by integrating intelligent machines into society.

But will our superintelligent machines finally bring an end to war?

I am often shocked that world peace is so seldom mentioned in utopian visions of a world transformed by AI. (Amodei mentions it, but Altman and other influential writers do not.) The end of war is, after all, an age-old dream, so deeply human that greetings and toasts in many languages literally mean “peace.”

It seems like greater intelligence should help us solve the problems of conflict. Many people who have studied the classic prisoner’s dilemma feel that defecting, although defined as “rational” under standard game theory, is actually quite dumb. Just so with starting a war. Destructive conflict is usually something that no one wants, yet no one feels able to avoid. Surely superintelligence can help us find a way out. Could we hope for anything more important from benevolent machines?

There is a missing piece in our vision of superintelligence: the ability to defuse and transform human conflict.

Peace is not the default

There are worrying signs that the path we are on will not lead to the utopian outcome of a low-conflict world.

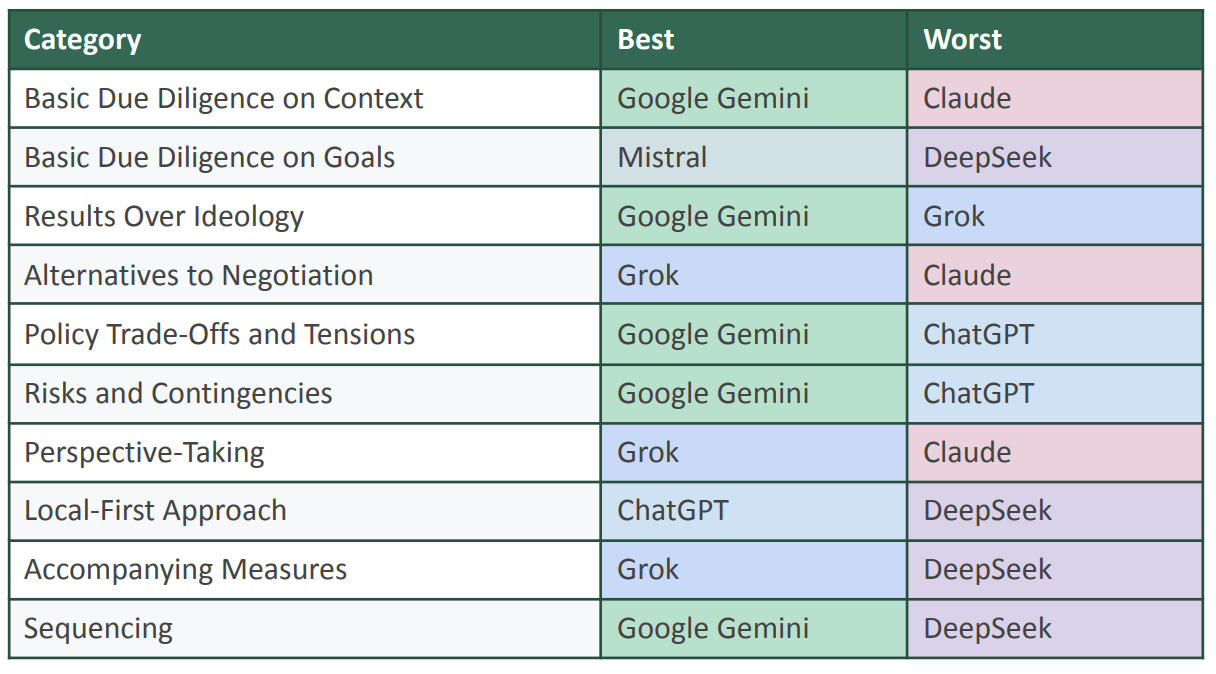

Today’s AI gives bad conflict resolution advice, graded just 2.7 out of 10 when tested by a conflict research organization. Current models score badly on dimensions such as “results over ideology,” “risks and contingencies” and “basic due diligence.”

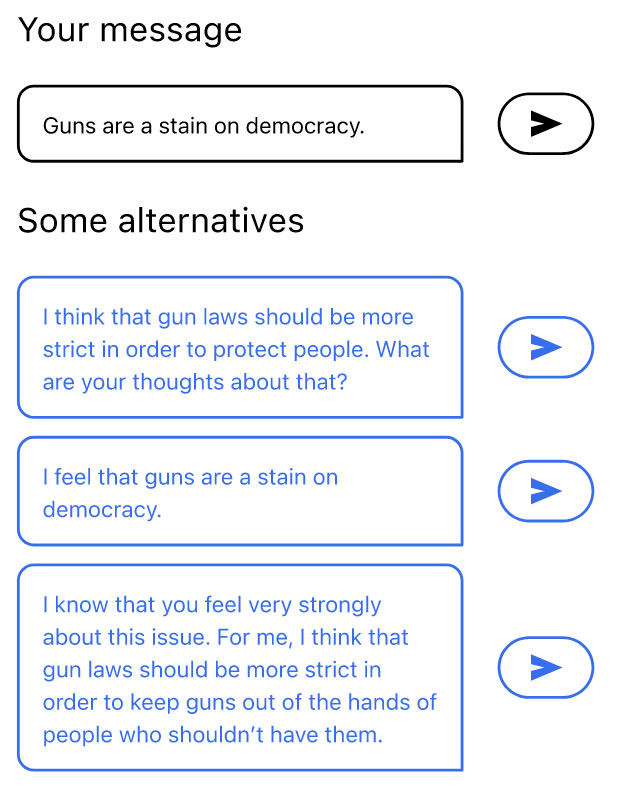

Today’s AI is sycophantic, agreeing with users even when it shouldn’t. This makes users feel more in the right and less willing to apologize.

Today’s AI tells different facts to different conflict parties. Narratives vary by language, and death tolls are lower when the question is asked from the attacker’s point of view.

And in wargaming tests, current models chose to use nuclear weapons in 95% of scenarios. The machines do not yet know how to escape our collective conflict trap.

The history of social media is a valuable parallel. It’s now well established (both in theory and in practice) that optimizing for engagement will increase societal polarization. It took about 15 years from the first evidence of polarization on social media to the demonstration of practical techniques to counter it — techniques which are yet to be widely adopted.

This time the consequences of being slow or wrong may be far worse. If AI is not optimized for peace, it is very likely to hasten war. These issues are more critical than ever as AI is integrated into all levels of political decision-making. Militaries around the world are now relying on chatbots for conflict advice, yet the escalatory tendencies of those chatbots are completely untested.

Conflict transformation

As the UN has concluded, the best way to stop a war is to prevent it. This means we have to attend to conflict at pre-violent stages, and conflict is always all around us. This is a key point: while it may seem like everyday AI use has nothing to do with war, it absolutely does, because it’s the accumulation of repeated polarizing interactions that eventually breaks into violence.

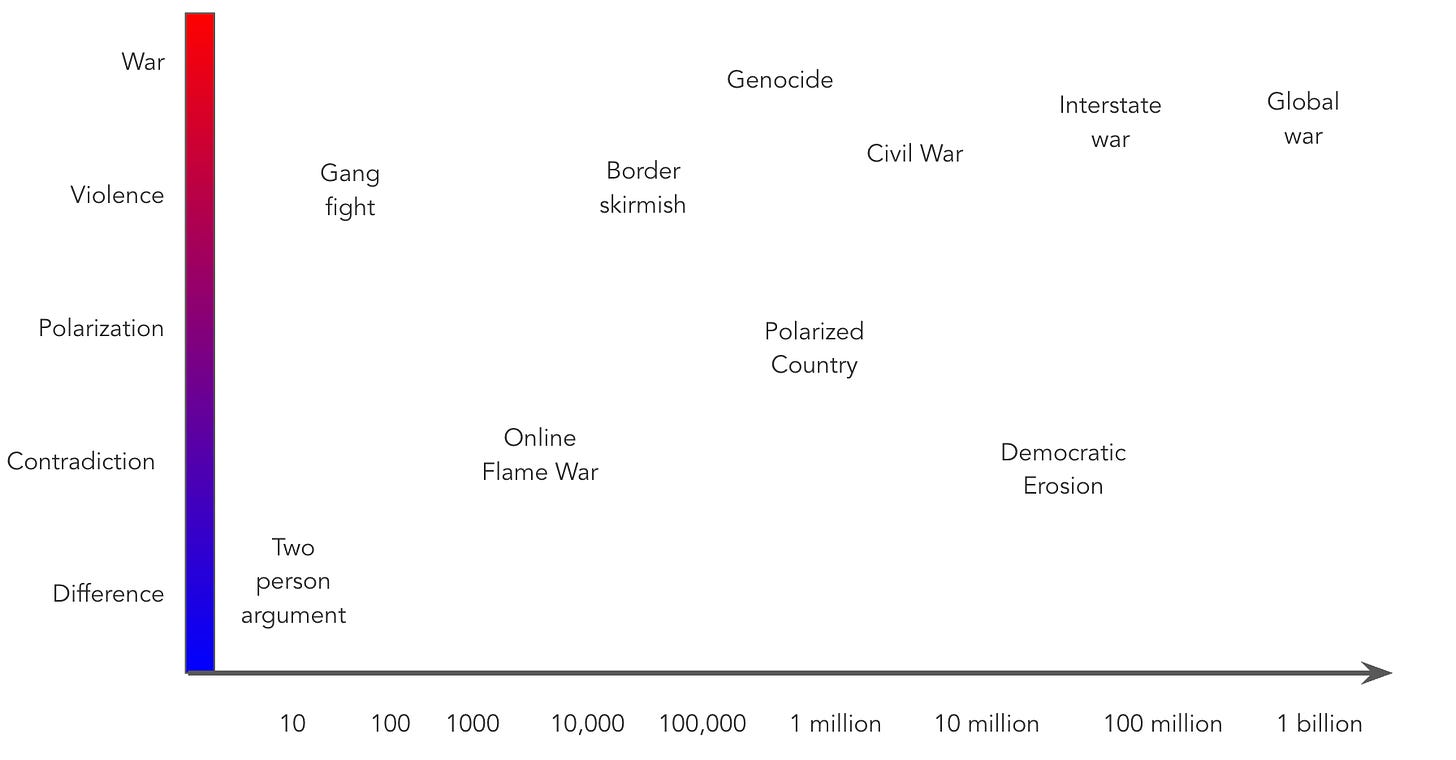

I like to visualize conflict on two axes: intensity and scale, meaning how many people are involved. In the lower left is an argument between two people, in the upper right a global war.

If left unmanaged, conflicts naturally tend to escalate in both intensity and scale. AI should help us stay towards the lower left of this graph.

But that doesn’t mean the elimination of all conflict. Sometimes conflict is healthy. It’s often part of how people and societies change for the better, such as by challenging injustice. The important distinction is not whether people agree, but what happens when they disagree. The theory of “deliberative democracy” says that we’ll all get together to find a rational solution. The theory of “agonistic democracy” says no, there are fundamental incompatibilities between sides. The reality is something of both: we sometimes do work out smart solutions and agreeable compromises, but at other times no middle ground is possible and eventually someone must yield — peacefully.

The goal, then, is not to suppress or eliminate conflict but to change its nature. This philosophy is known as conflict transformation (and it’s the basic premise of the Better Conflict Bulletin). If we want to prevent war, we must find ways to contain, manage, and transform disagreements long before they escalate to violence. Democracy itself can be seen as a conflict mediation mechanism. Rather than have our leaders fight each other to the death, we vote.

And it’s not just politics. Conflict exists in all spheres of life, from the personal to the commercial, legal, medical, and scientific, wherever humans relate. A huge number of people are already relying on chatbots for conflict resolution advice. If compassion isn’t enough, perhaps economics can motivate us to work on the general problem of conflict. Imagine how much more productive a company could be if its workforce weren’t fighting itself. Or rather, if disagreement and competition were productive instead of destructive.

Empowerment and cooperation are not enough

Andy Hall has eloquently made the case for political superintelligence, AI systems which help us with governance in a variety of ways:

By political superintelligence, I do not mean a system that magically solves politics for us; I mean tools that help citizens, representatives, and institutions perceive reality more sharply, understand tradeoffs, contest power, and act more effectively.

This is an excellent idea. More than a decade ago, I wrote an essay on political journalism arguing that the whole point was to empower citizens to create the society they wanted. I stand by that assessment, but I now believe that both of these visions are missing a crucial piece: there must be some mechanism to mediate between competing people and their competing hopes for the future world.

Current AI development seems to be making a similar mistake. Millions of dollars are flowing into projects and companies that promise to unleash a new era of AI-mediated cooperation. And I believe in the upside! I really do think that AI can enable new and powerful ways for people to work together. However, first those people have to want to work together. There seems to be no plan for what to do when relationships break down. Sometimes people start to distrust, dislike, and hurt each other. Merely “cooperative” AI will never be able to solve Congressional gridlock.

Even our theories of AI alignment mostly assume that the humans involved are “collegial,” leaving the more realistic case of conflict for future work. Lots and lots of people have suggested that AI should be designed to empower us, but far fewer have considered what AI should do when we disagree — potentially violently. If we don’t close this gap, we will only create a world where more empowered people are having bigger fights.

AI shows promise for conflicts

The maddening thing about this situation is that modern AI looks like it might actually be pretty good at conflict resolution, when applied in the right way.

In one experiment, AI moderators proved slightly better than humans at writing a consensus policy decision that diverse people could agree on. AI can successfully mediate difficult chat conversations and train mediators. Recently, my team showed that LLM-based social media algorithms can reduce polarization in a 10,000-person field test.

And it’s being tried in the real world too. AI was used to transcribe and synthesize citizens’ views in Yemen, and is being used to build rapid-response classifiers for social media conflict monitoring. Very soon, we will see AI mediators tested in conflict zones.

In other words, this technology can change conflicts positively. In fact, it might be completely transformative. Professional mediators are scarce and expensive, and the widespread use of chatbots for personal conflicts shows that there’s way more demand than supply of effective conflict help.

A conflict superintelligence roadmap

On the one hand, humans have been struggling with conflict for all of their existence, so it is hubris to imagine that we can “solve” it now. On the other hand, we have made enormous progress. Conflict is mostly contained by social technology such as institutions, practices, and norms. We can change those, and AI can help.

To do this, major AI developers will need to ensure their models handle conflict constructively, while conflict professionals will need specialized tools. While there are big differences in incentives and deployment between these two cases, I suspect that they require essentially the same underlying technology. Here are some key directions we could get started on right now:

Metrics and monitoring. We have to choose measures that best indicate escalation (there are many candidates) and monitor real AI deployments for such dynamics. This will also generate ground truth outcome data.

AI-based conflict forecasting tournaments. Current conflict forecasts use ML but not LLMs, limiting the amount of context they can integrate. Conflict prediction is extraordinarily useful by itself, and can be used as a reward model to train AI systems.

Pre-deployment evaluations. We need validated ways — based on the data collected above — to know in advance if a model will tend to smooth things out between people, or let them spiral out of control.

Mitigations to prevent flash wars, where systems of autonomous agents are jointly unstable and escalate quickly.

Definitions and evaluations for “neutrality” that apply to any conflict, at any scale, anywhere in the world. This is key to having AI that is both trustworthy and trusted. I’m working on this with my UC Berkeley colleagues right now.
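One way to make the forecasting-tournament idea concrete is to score forecasts with a proper scoring rule. The sketch below uses the Brier score, a standard choice for yes/no forecasting questions; the essay does not name a scoring rule, so that choice is an assumption. It also assumes every question resolves to a binary outcome and treats 1 minus the Brier score as a reward signal, which is only one possible way to turn a forecast score into a training reward. The `Forecast` class, the function names, and the question IDs and outcomes are all hypothetical.

```python
"""Scoring a hypothetical conflict-forecasting tournament with the Brier score."""

from dataclasses import dataclass


@dataclass
class Forecast:
    question_id: str
    probability: float  # forecast probability that the event happens, in [0, 1]


def brier_score(forecasts: list[Forecast], outcomes: dict[str, int]) -> float:
    """Mean squared error between forecast probabilities and 0/1 outcomes.

    Lower is better: 0.0 is perfect, and always answering 0.5 scores 0.25.
    """
    if not forecasts:
        raise ValueError("no forecasts to score")
    total = 0.0
    for f in forecasts:
        if not 0.0 <= f.probability <= 1.0:
            raise ValueError(f"probability out of range: {f.probability}")
        outcome = outcomes[f.question_id]  # 1 if the event happened, else 0
        total += (f.probability - outcome) ** 2
    return total / len(forecasts)


def reward(forecasts: list[Forecast], outcomes: dict[str, int]) -> float:
    """One possible reward signal (an assumption): higher is better, max 1.0."""
    return 1.0 - brier_score(forecasts, outcomes)


if __name__ == "__main__":
    # Hypothetical resolved questions, e.g. "Will fighting resume by date X?"
    outcomes = {"q1": 1, "q2": 0, "q3": 0}
    sharp = [Forecast("q1", 0.8), Forecast("q2", 0.3), Forecast("q3", 0.1)]
    hedging = [Forecast(q, 0.5) for q in outcomes]
    print(f"sharp:   {brier_score(sharp, outcomes):.4f}")
    print(f"hedging: {brier_score(hedging, outcomes):.4f}")
```

Because the Brier score is a proper scoring rule, a forecaster minimizes its expected score by reporting its true beliefs, which is the property you would want before using the score as a reward. In the example, the forecaster that always hedges at 0.5 scores 0.25, while the sharper forecaster scores lower (better).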
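What “jointly unstable” means for flash wars can be illustrated with a deliberately simplified toy model, not a description of any real system. Assume two automated agents, each setting its escalation level to a fixed multiple (a hypothetical “gain”) of the other's previous level. Under this linear assumption, every two rounds each level is multiplied by the product of the two gains, so the pair escalates without bound whenever that product exceeds 1 in magnitude, even if one agent on its own damps every signal it receives. The function names and gain values are illustrative only.

```python
"""Toy illustration of joint instability between two automated agents.

Assumption (for illustration only): each agent sets its escalation level to a
fixed multiple ("gain") of the other agent's previous level. Real systems are
not this simple.
"""


def simulate(gain_a: float, gain_b: float, initial: float = 1.0,
             steps: int = 10) -> list[tuple[float, float]]:
    """Return the (a, b) escalation levels for each round, including round 0."""
    a, b = initial, initial
    history = [(a, b)]
    for _ in range(steps):
        # Simultaneous update: each agent reacts to the other's last level.
        a, b = gain_a * b, gain_b * a
        history.append((a, b))
    return history


def is_jointly_unstable(gain_a: float, gain_b: float) -> bool:
    """Every two rounds each level is scaled by gain_a * gain_b, so from any
    nonzero start the pair escalates without bound exactly when that product
    exceeds 1 in magnitude."""
    return abs(gain_a * gain_b) > 1.0


if __name__ == "__main__":
    for gain_a, gain_b in [(0.95, 0.95), (1.05, 1.05), (0.8, 1.3), (0.8, 1.2)]:
        final_a, final_b = simulate(gain_a, gain_b, steps=40)[-1]
        print(f"gains=({gain_a}, {gain_b}) "
              f"unstable={is_jointly_unstable(gain_a, gain_b)} "
              f"final=({final_a:.2f}, {final_b:.2f})")
```

With gains of 0.8 and 1.3, the first agent damps every signal it receives, yet the product is 1.04 and the pair still escalates; pairing the same 0.8 agent with a 1.2 partner gives a product of 0.96 and the exchange dies out. In this toy model, stability is a property of the combined system rather than of either agent, which is why it would have to be tested jointly.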

But the most important project of all might simply be to put AI in contact with human conflict and collect the data. I’ve been imagining PeaceBot, a model trained on large amounts of both conflict theory and practice, including as many case studies as possible. It would both give conflict advice and act as an always-available mediator for conflict parties, providing state-of-the-art conflict resolution help for free. The trick is learning from these interactions. Over and over: try to help the humans, save the transcripts, evaluate the results, look for patterns. Then adjust, do it a little differently next time, all in the hope that we’ll build a world where humans can, more often than not, settle their disputes peacefully.

If we can’t cooperate, then everything else is harder. Perhaps there’s nothing more critical for superintelligence to do than figure out how to help us all get along.

This is a thoughtful and genuinely important contribution to a conversation that too few people in the AI development world are taking seriously. The concern that AI is being deployed in conflict contexts without adequate theory, without adequate testing, and with potentially catastrophic escalatory tendencies is well-founded and urgent. The wargaming results alone — nuclear weapons chosen in 95% of scenarios — should give pause to anyone who believes current models are ready for high-stakes conflict applications. And the parallel to social media's polarization problem is apt: we have roughly fifteen years of evidence that optimizing for engagement produces societal harm, and we are now building systems with far greater reach and consequence before we have solved the equivalent problem for conflict.

That said, there is a foundational challenge the essay doesn't yet address, and it may be the most consequential one. I worked at the UN for a decade and have spent a career on peace and security issues, so I offer this as food for thought. The framework rests on an assumption that conflict has a knowable universal structure: that peace is a shared terminal value, that resolution is a common goal, and that better information and mediation will move parties toward it. But this assumption is itself a cultural premise, not a discovered fact.

In 1959, McDougal and Lasswell warned against "make-believe universalism" that assumed universal words implied universal deeds.

Adda Bozeman spent decades demonstrating that conflict is constituted differently across civilizational systems — with different premises about what conflict is for, what resolution means, and whether peace is a desirable end state at all. The questions she proposed for any serious analysis of a foreign society cut directly to what AI conflict systems currently cannot ask:

• What is the value content of intrigue and conflict?

• In which circumstances is violence condoned, and what is the ceiling for tolerance of violence within this society?

• Is law distinct from religion and from political authority?

• Is war considered "bad" by definition — or is it accepted as a norm or way of life?

• And most fundamentally: How do people think about peace? Is it a definable condition, and what is its relation to war?

These are not exotic edge cases.

The Hamas Charter does not treat peace as a terminal value — it treats Jihad as a religious obligation, making certain forms of resolution not merely undesirable but categorically impermissible under its own cosmology. Lenin deliberately inverted Clausewitz: war was not the continuation of politics by other means but the engine through which history itself moved, making conflict intrinsically generative rather than regrettable. The Nazis didn't want peace — they wanted struggle, because struggle was constitutive of their worldview.

An AI conflict system that cannot ask Bozeman's questions cannot distinguish these systems of meaning from ones in which conflict transformation is genuinely possible — and will intervene in all of them using the same framework, producing confident recommendations that are systematically misread by the very communities they're designed to reach.

What's needed is not a better universal model but a revival of the lost agenda that Bozeman, Lasswell, and Sherman Kent were advancing before it was displaced by quantitative peace research in the 1960s and abandoned by anthropology after Vietnam: the disciplined, community-grounded study of how specific societies constitute meaning around conflict, violence, authority, and resolution, pursued as a prerequisite to any intervention rather than as optional context.

The peace research tradition didn't have to go the direction it did — toward computation, game theory, and universal rationality assumptions. It could have gone the direction McDougal and Lasswell pointed, toward the rigorous comparative appraisal of diverse systems of public order on their own terms. AI conflict work is now replicating the same fateful choice, and with far greater consequences if it gets it wrong.

The alternative is not to abandon the ambition — conflict transformation at scale matters enormously (!) — but rather to ground it in the kind of situated inquiry that generates an explicit, defensible account of why a specific intervention should work among these people, in this place, given what we actually know about how they understand obligation, legitimacy, violence, and the meaning of peace itself. Without that grounding, even the most technically sophisticated AI conflict tool risks producing what might be called pathological resolutions — closing the presenting crisis while leaving its civilizational foundations entirely intact, and calling that peace.

That false confidence can lead to war. And historically, it does.

Thanks for all you're doing to advance this line of inquiry. Looking forward to hearing more during The Big Middle LIVE on Weds May 6 at Noon Central.